Why 'use the best model' is incomplete advice

The best model for a given task depends on two things: what the task requires and what data the task involves. Most AI tool decisions optimize only for the first factor.

When sensitive customer data routes to a third-party model because that model is more capable, the business has implicitly made a privacy decision, usually without examining it.

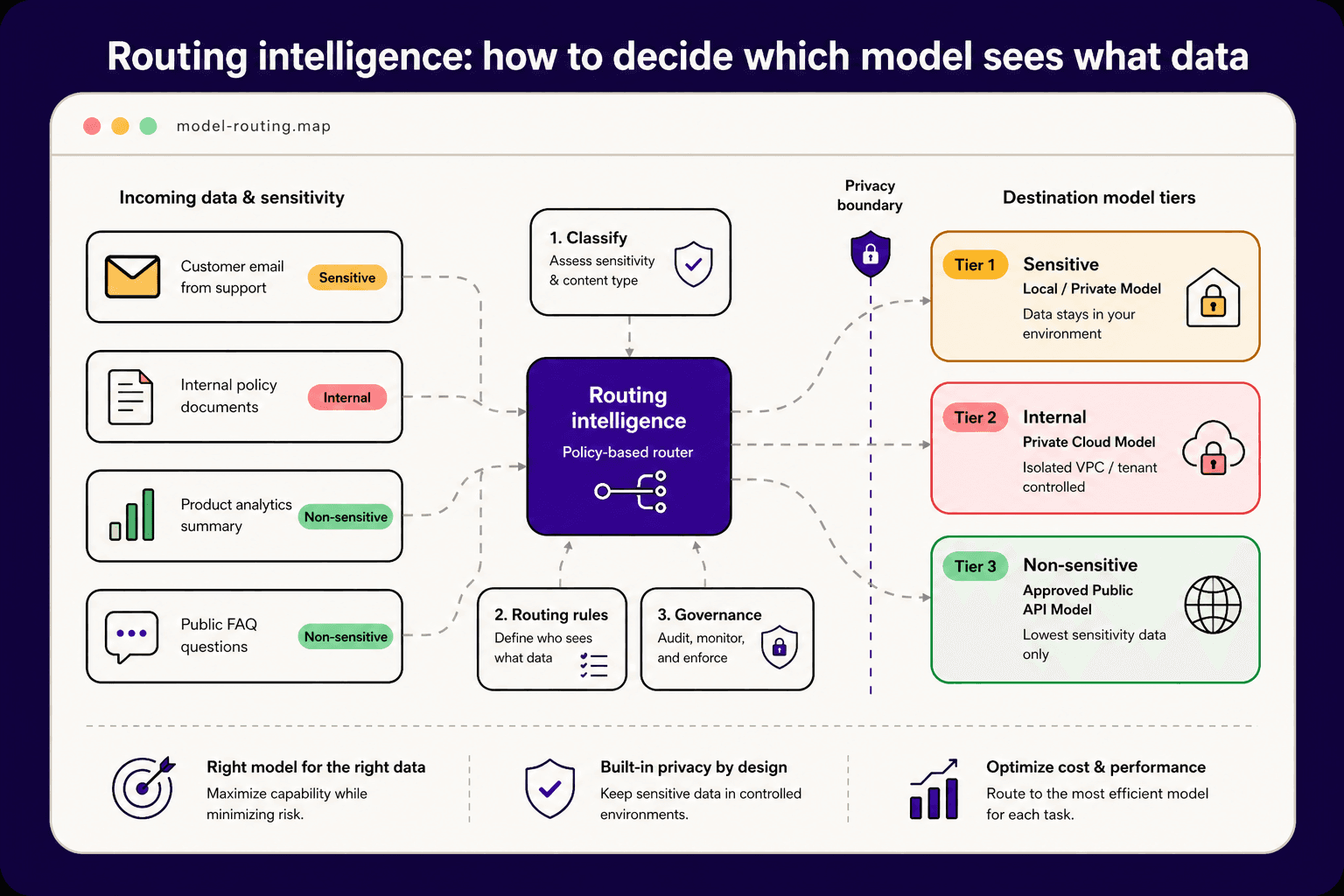

A practical data classification system

Tier 1 — Sensitive: Customer PII, financial records, employee data, supplier pricing, contractual terms. This data should never leave an environment the business controls.

Tier 2 — Internal: Internal communications, operational data, non-customer business context. This data can route to client-hosted private models but should not go to shared inference infrastructure.

Tier 3 — Non-sensitive: Publicly available context, product descriptions, general research tasks. This data can route to any approved external model without meaningful privacy risk.
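As a rough illustration of how the three tiers might be applied to a task's inputs, the sketch below labels individual data fields and assigns the whole task the most restrictive tier found. The field names, the "most restrictive tier wins" rule, and the choice to treat unknown fields as Tier 1 are all assumptions made for the example, not rules stated above.

```python
from enum import IntEnum


class DataTier(IntEnum):
    """Lower number = more restrictive, matching the tier numbering above."""
    SENSITIVE = 1
    INTERNAL = 2
    NON_SENSITIVE = 3


# Hypothetical field labels; a real system would maintain its own catalogue.
FIELD_TIERS = {
    "customer_email": DataTier.SENSITIVE,
    "supplier_price": DataTier.SENSITIVE,
    "ops_ticket_note": DataTier.INTERNAL,
    "product_description": DataTier.NON_SENSITIVE,
}


def classify(fields):
    """Return the most restrictive tier among the given field names.

    Assumption: unknown fields (and empty inputs) fail closed to SENSITIVE.
    """
    tiers = [FIELD_TIERS.get(name, DataTier.SENSITIVE) for name in fields]
    return min(tiers, default=DataTier.SENSITIVE)


print(classify(["product_description", "ops_ticket_note"]).name)
print(classify(["product_description", "customer_email"]).name)
```

Failing closed is a deliberate choice in this sketch: an unlabeled field is handled as if it were sensitive until someone classifies it.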

Building the routing layer

A routing layer intercepts each AI task before execution and applies classification logic: which tier does the input data belong to, and which model tier is authorized for that classification? It then routes the task accordingly.

This is not a theoretical architecture. It is implementable today with orchestration tools that sit between a business's data and its AI providers. The key is making the routing explicit and auditable rather than implicit and invisible.
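A minimal sketch of an explicit, auditable routing step might look like the following. The deployment categories, model names, and audit record fields are hypothetical, and the mapping from tier to authorized deployment is one possible reading of the tiers, not a prescribed policy.

```python
import json
from datetime import datetime, timezone

# Assumed policy: data tier -> deployment classes authorized for that tier.
AUTHORIZED = {
    1: {"business_controlled"},
    2: {"business_controlled", "client_hosted_private"},
    3: {"business_controlled", "client_hosted_private", "approved_external"},
}

# Hypothetical model registry, listed in order of preference.
MODELS = [
    {"name": "frontier-external", "deployment": "approved_external"},
    {"name": "private-cloud-llm", "deployment": "client_hosted_private"},
    {"name": "onprem-open-weight", "deployment": "business_controlled"},
]

AUDIT_LOG = []  # In practice this would be durable, append-only storage.


def route(task_id, tier):
    """Pick the first preferred model authorized for the tier and log the decision."""
    allowed = AUTHORIZED.get(tier)
    if allowed is None:
        raise ValueError(f"unknown data tier: {tier!r}")
    model = next((m for m in MODELS if m["deployment"] in allowed), None)
    if model is None:
        raise LookupError(f"no authorized model available for tier {tier}")
    record = {
        "task_id": task_id,
        "tier": tier,
        "model": model["name"],
        "deployment": model["deployment"],
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }
    AUDIT_LOG.append(json.dumps(record))
    return model["name"]
```

Because every decision produces a log record, anyone reviewing the system can see which tier a task was assigned and which model received it, rather than inferring it from application behavior.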

Performance versus privacy: the false trade-off

The usual objection to private model routing is performance: private models are assumed to be slower and less capable than frontier public models. That was truer two years ago than it is today.

Modern private deployment options (locally hosted open-weight models, client-controlled cloud inference, and fine-tuned domain models) have closed most of the performance gap for the specific tasks businesses actually automate.

Making routing decisions durable

Routing decisions made at the infrastructure level survive model upgrades, provider changes, and policy updates. Routing decisions made at the application level require re-examination every time something changes.
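One way to picture infrastructure-level routing is to keep the policy as data, separate from the list of models, so that a model or provider change only touches the model list and can be checked against the unchanged policy. The sketch below assumes a hypothetical policy, registry, and set of tier assignments; none of the names come from a specific product.

```python
# Assumed policy: data tier -> deployment classes it may be sent to.
POLICY = {
    1: {"business_controlled"},
    2: {"business_controlled", "client_hosted_private"},
    3: {"business_controlled", "client_hosted_private", "approved_external"},
}


def find_violations(assignments, registry, policy=POLICY):
    """List every tier whose assigned model is missing or outside the policy.

    assignments: tier -> model name; registry: model name -> deployment class.
    """
    violations = []
    for tier, allowed in sorted(policy.items()):
        model = assignments.get(tier)
        if model is None:
            violations.append(f"tier {tier}: no model assigned")
            continue
        deployment = registry.get(model)
        if deployment not in allowed:
            violations.append(
                f"tier {tier}: {model} runs on {deployment}, not authorized"
            )
    return violations


# Hypothetical registry and assignments.
registry = {
    "onprem-open-weight": "business_controlled",
    "frontier-external": "approved_external",
}
assignments = {1: "onprem-open-weight", 2: "onprem-open-weight", 3: "frontier-external"}
print(find_violations(assignments, registry))

# A provider change that repoints tier 2 by mistake is caught by the same check.
assignments[2] = "frontier-external"
print(find_violations(assignments, registry))
```

Running a check like this whenever the registry or assignments change is one way to let the policy outlive individual models, since application code never needs to know which provider sits behind a tier.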

Building the routing layer as infrastructure rather than application logic is the decision that makes an AI system governable at scale.
