The compliance collision

Healthcare, legal, financial services, government contracting, and other regulated industries have specific rules about where data can be processed, stored, and transmitted. These rules often predate AI by decades — and they apply to AI just as they apply to any other data processing system.

A law firm that cannot store client communications on shared infrastructure cannot route client data through a third-party AI model. A healthcare practice that operates under patient data protection rules cannot send clinical notes to a public inference endpoint. These are not edge cases — they are the standard operating conditions for entire industries.

Why 'the vendor is compliant' is not enough

Many AI tool vendors hold compliance certifications for their infrastructure. Those certifications typically cover the vendor's storage and transmission practices, but often not how data is used for model training, what happens to data in the inference pipeline, or which sub-processors the vendor uses.

A compliance certification at the storage layer does not automatically extend to the AI processing layer. The legal team that signed off on the cloud storage vendor did not necessarily sign off on the AI model vendor that now processes data from that storage.

What private deployment resolves

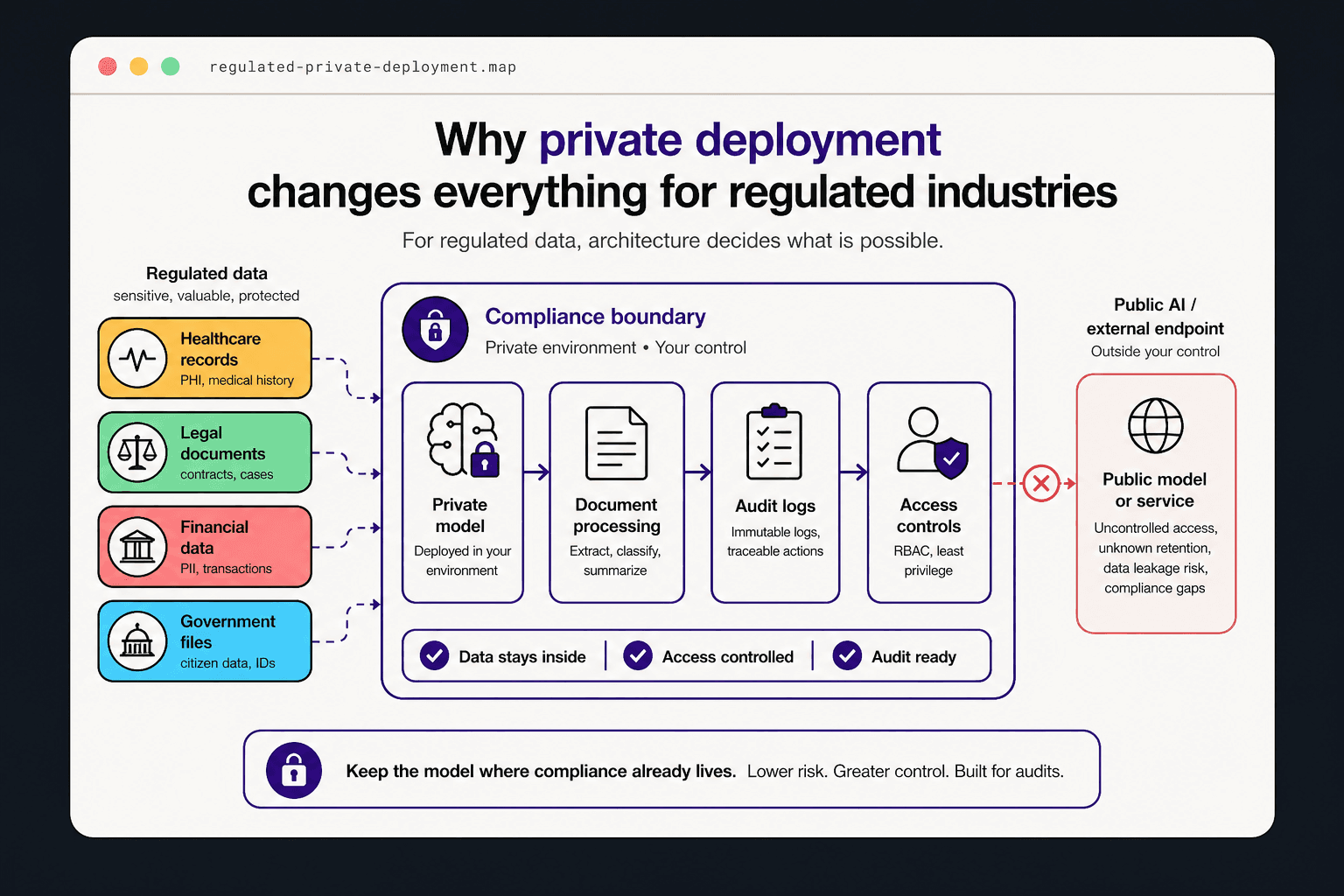

When an AI model runs on infrastructure the business controls — whether on-premises, in a private cloud, or in a client-owned account — the data never leaves the compliance boundary.

This is not a workaround or an accommodation — it is the architecturally correct approach for any regulated data category. It makes the compliance conversation simple: the data stayed inside the environment that was already approved for it.
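One way to make that boundary enforceable rather than just documented is to check every inference endpoint against a list of approved hosts before any data is sent. The sketch below is illustrative only: the host names, `APPROVED_HOSTS`, and both function names are hypothetical, and a real deployment would normally enforce the same rule at the network layer as well.

```python
from urllib.parse import urlparse

# Hypothetical hosts inside the already-approved environment (assumption).
APPROVED_HOSTS = {"inference.internal.example", "10.0.12.5"}


def is_inside_boundary(endpoint_url: str, approved_hosts=APPROVED_HOSTS) -> bool:
    """Return True only if the endpoint's host is on the approved list."""
    host = urlparse(endpoint_url).hostname  # None when no host can be parsed
    return host is not None and host in approved_hosts


def require_inside_boundary(endpoint_url: str) -> str:
    """Return the URL unchanged, or raise before any data would be sent."""
    if not is_inside_boundary(endpoint_url):
        raise ValueError(f"Endpoint is outside the compliance boundary: {endpoint_url}")
    return endpoint_url
```

Calling `require_inside_boundary` in the code path that builds each request means a misconfigured endpoint fails loudly instead of quietly sending regulated data outside the approved environment.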

Performance in private environments today

The objection to private deployment in regulated industries used to be performance: open-weight models available for private deployment were significantly less capable than frontier models.

That gap has narrowed substantially. For the specific tasks that regulated businesses automate (document classification, structured data extraction, report generation, routing), current privately deployable models are capable enough for production use.

The audit conversation you want to be ready for

When a regulator, a client's legal team, or an internal compliance officer asks how your AI workflows handle regulated data, the strongest answer is a clear architecture diagram showing exactly what data the AI processes, where the processing happens, and what controls are in place.

Businesses that build private AI infrastructure have that answer ready. Businesses that chose the fastest deployment path often discover their answer during the audit instead of before it.
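The same three questions (what data, where it is processed, what controls apply) can also be kept as a small machine-readable inventory alongside the diagram. This is a minimal sketch under stated assumptions: the `AIWorkflowRecord` fields and the example values are hypothetical, not a regulatory template.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class AIWorkflowRecord:
    """One AI workflow, described the way an auditor would ask about it."""
    workflow: str                 # what the automation does
    data_categories: list[str]    # what regulated data it processes
    processing_location: str      # where inference actually runs
    controls: list[str] = field(default_factory=list)  # safeguards in place


def audit_summary(records: list[AIWorkflowRecord]) -> str:
    """Serialize the inventory to JSON so it can be shared with reviewers."""
    return json.dumps([asdict(r) for r in records], indent=2)


# Hypothetical example entry (values are illustrative only).
inventory = [
    AIWorkflowRecord(
        workflow="clinical note classification",
        data_categories=["patient clinical notes"],
        processing_location="on-premises inference server",
        controls=["no external network egress", "access logging"],
    )
]
print(audit_summary(inventory))
```

Keeping the inventory in version control next to the deployment configuration makes it easier to show that the documented answer and the running system still match.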
