What kills most AI pilots

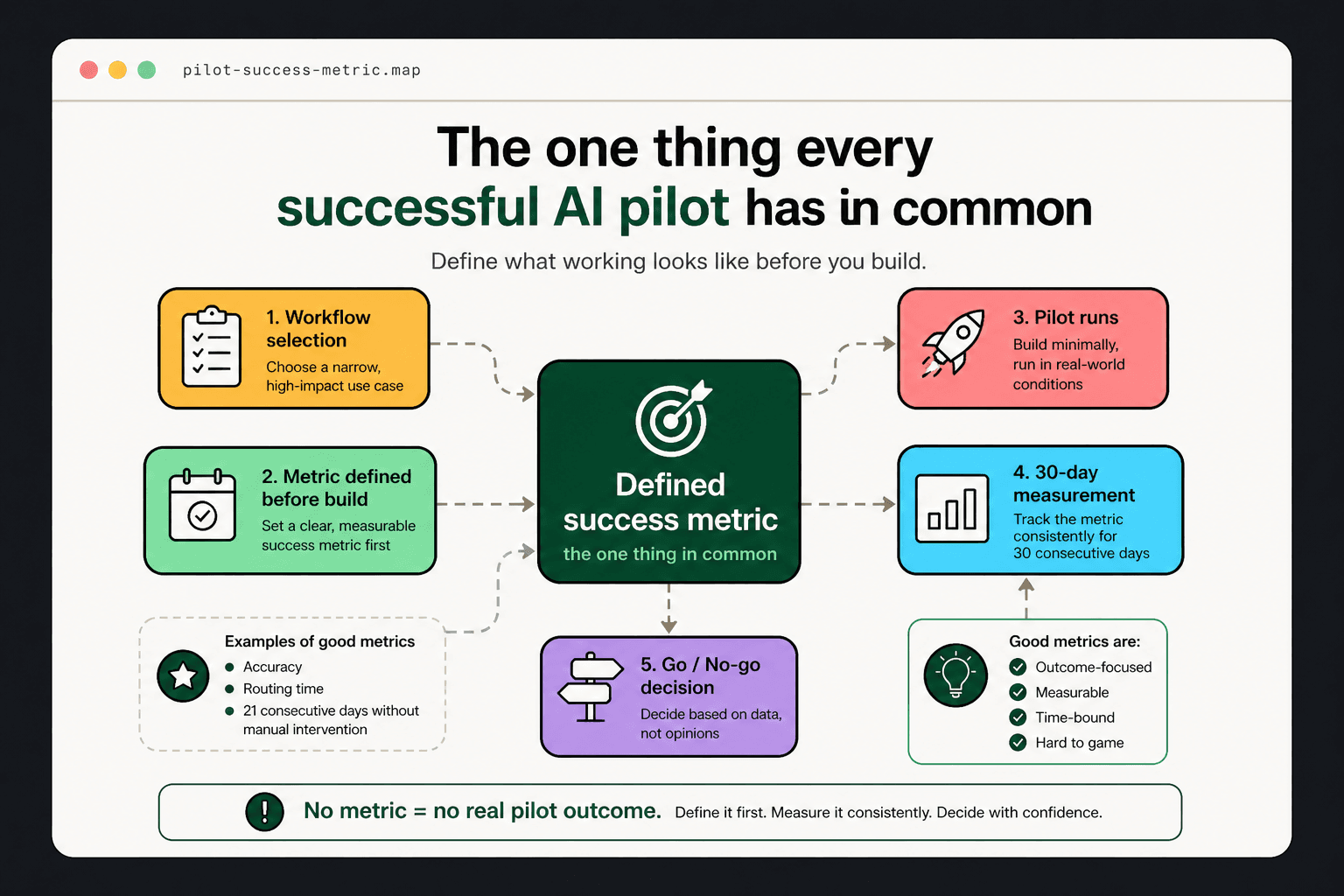

Most AI pilots do not fail because the technology does not work. They fail because nobody agreed on what 'working' looks like before the project started.

Without a clear success criterion, the pilot runs, produces output, and then enters an indefinite evaluation phase where everybody has a different opinion about whether it is good enough. That ambiguity erodes confidence, delays decisions, and often ends with the project quietly shelved.

What a good success metric looks like

A good pilot success metric is specific, measurable within 30 days, and does not require the people who built the automation to explain why it counts.

Examples: 'Support ticket classification accuracy above 92% on a sample of 200 tickets.' 'Average time from ticket submission to routing under 4 minutes, down from 2.5 hours.' 'Order exception report generated and delivered by 8am daily without manual intervention for 21 consecutive days.'

Why the metric needs to exist before the build

Setting the metric after the pilot ships allows the metric to be calibrated to what was achieved rather than what was needed. That is not measurement — it is post-hoc justification.

The metric defined before the build forces a conversation about what the business actually needs. It often surfaces requirements that change the design. That conversation is better to have during planning than during evaluation.

The secondary benefit: organizational alignment

When the success metric is defined and communicated before the pilot starts, it creates shared expectations across the people involved — the operations team, the finance team, and whoever will approve the next phase of automation.

A pilot that hits a pre-defined metric is not just a technical success — it is an organizational statement. The business said it wanted X and the pilot delivered X. That is the foundation for the next investment decision.

What to do if the pilot does not hit the metric

A pilot that misses its metric is not a failure — it is data. The miss usually points to one of three things: the workflow was more complex than the audit estimated, the data quality was lower than assumed, or the approval logic was not right.

Each of these is fixable. The businesses that treat a miss as a learning and iterate quickly are the ones that end up with working automation. The businesses that treat a miss as proof that AI is not ready lose the organizational window and start again from zero.

Ready to act?