The autonomy myth

The narrative around AI automation often treats human involvement as a temporary limitation, something to engineer out as models get better. In most business contexts, that framing is wrong.

Human oversight is not a limitation. It is a feature that makes automation trustworthy enough to run at scale. The businesses running the most AI automation in production tend to be the ones with the most structured approval workflows.

What goes wrong without an approval layer

Without approval logic, errors compound. An AI that routes one lead incorrectly creates one bad outcome. An AI that routes leads incorrectly for two weeks creates two weeks of bad outcomes and leaves no clean audit trail for recovery.

The worst AI automation failures are not dramatic. They are quiet accumulations of small errors that nobody catches until the damage is visible in a revenue report or a customer complaint spike.

Three approval models that work in practice

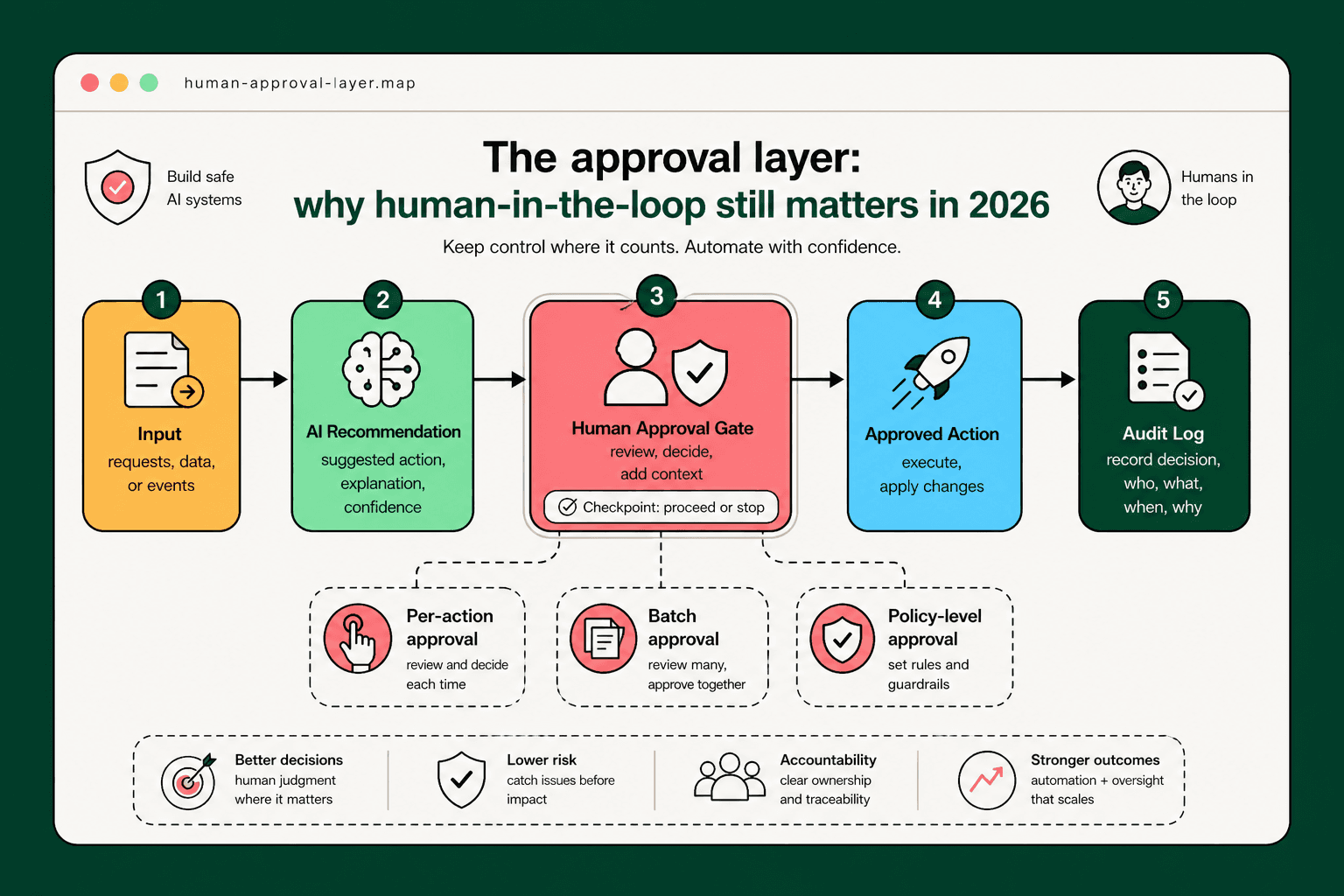

Per-action approval requires human confirmation for every automated step. It is appropriate for irreversible actions such as sending a payment, deleting a record, or sending a communication to a customer.

Batch approval surfaces a queue of recommended actions for a human to review and approve in bulk. It is appropriate for routine classification tasks where individual errors are low-cost and the volume makes per-action review impractical.

Policy-level approval means a human defines the rules once, and the automation executes within those rules without further review until a rule is changed. It is appropriate for well-understood, high-volume workflows with low consequence per action.
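The three models can be expressed as a single routing decision made before any action runs. The following is a minimal sketch, not a reference implementation: the names `ApprovalGate`, `Action`, `ApprovalMode`, and the `confirm` and `reviewer` callbacks are all hypothetical, and the choices to send irreversible actions to per-action review and to escalate out-of-policy actions to a human are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable


class ApprovalMode(Enum):
    PER_ACTION = "per_action"  # a human confirms every step
    BATCH = "batch"            # a human reviews a queue in bulk
    POLICY = "policy"          # a human-written rule decides


@dataclass
class Action:
    kind: str         # hypothetical label, e.g. "send_payment"
    payload: dict
    reversible: bool


@dataclass
class ApprovalGate:
    # Hypothetical mapping from action kind to approval model.
    modes: dict[str, ApprovalMode]
    # Hypothetical policy rules, written once by a human.
    policies: dict[str, Callable[[Action], bool]] = field(default_factory=dict)
    batch_queue: list[Action] = field(default_factory=list)
    audit_log: list[tuple[str, str]] = field(default_factory=list)

    def submit(self, action: Action, confirm: Callable[[Action], bool]) -> str:
        """Route one action; returns 'executed', 'rejected', or 'queued'."""
        mode = self.modes.get(action.kind, ApprovalMode.PER_ACTION)
        # Assumption: irreversible actions always get per-action review.
        if not action.reversible:
            mode = ApprovalMode.PER_ACTION

        if mode is ApprovalMode.BATCH:
            self.batch_queue.append(action)
            outcome = "queued"
        else:
            rule = self.policies.get(action.kind)
            within_policy = (
                mode is ApprovalMode.POLICY and rule is not None and rule(action)
            )
            # Assumption: anything outside policy escalates to a human.
            approved = within_policy or confirm(action)
            outcome = "executed" if approved else "rejected"

        self.audit_log.append((action.kind, outcome))
        return outcome

    def approve_batch(self, reviewer: Callable[[list[Action]], list[bool]]) -> int:
        """Apply one bulk review to the queue; returns how many were approved."""
        decisions = reviewer(list(self.batch_queue))
        approved = 0
        for action, ok in zip(self.batch_queue, decisions):
            self.audit_log.append((action.kind, "executed" if ok else "rejected"))
            approved += int(ok)
        self.batch_queue.clear()
        return approved
```

Every decision, whether made by a person or by a rule, lands in `audit_log`, which is the kind of record that makes recovery possible when something has been going wrong quietly. A real system would persist that log and perform the action itself; this sketch only records the outcome.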

Designing approval into the system from day one

Approval workflows added after an automation is live are almost always harder to implement than those designed in from the start. The integration points, the notification routing, and the escalation paths all require architectural decisions, not configuration changes.

The right time to decide 'what requires human approval and why' is during the audit phase, before a line of automation logic is written.

How approval design builds organizational trust

Teams that feel like automation happens to them resist it. Teams that can see what the automation is doing, intervene when it is wrong, and adjust its behavior over time develop ownership of it.

Approval design is not just risk management. It is the mechanism that converts an AI pilot into an AI operating system that a whole team believes in.
